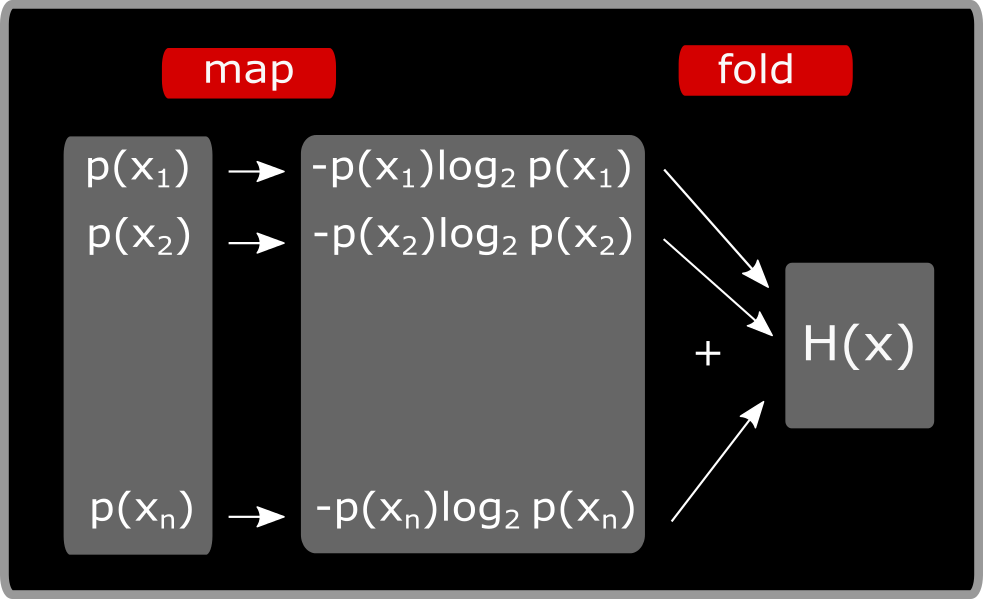

Entropy is the minimum number of bits required to describe one sample of a probability distribution. Now that we understand the goal of what we are trying to quantify, let's get into the specific definition. In the real world, we deal with much more complex probability distributions. In theory, we could use other units to describe information (for example nats, and dits), but since computers use bits to encode information, we use bits.īut what about probabilities that are more complicated? This is exactly why Claude Shannon's formalization is useful. Now you may be thinking "Why are bits used?" Well, we could simply send a 1 if it's sunny, and a 0 if it's rainy!Īs you can see, 1 bit of information is required to transmit what the weather is like from island Y, and in the case of island X, we don't need to transmit anything, because the weather is always 100% sunny. In this scenario, because there is a 50/50 chance of it being rainy/sunny, 1 bit of information is required. How many bits of information is required to transmitted this message? A message is transmitted from a weather station letting you know the weather of Y is sunny. There exists an island Y, where the weather is either sunny or rainy with a 50% probability. Since we are certain that the weather is sunny 100% of the time in X, zero bits of information is required. A message is transmitted from a weather station letting you know the weather of X is sunny. There exists an island X, where the weather is sunny 100% of the time.

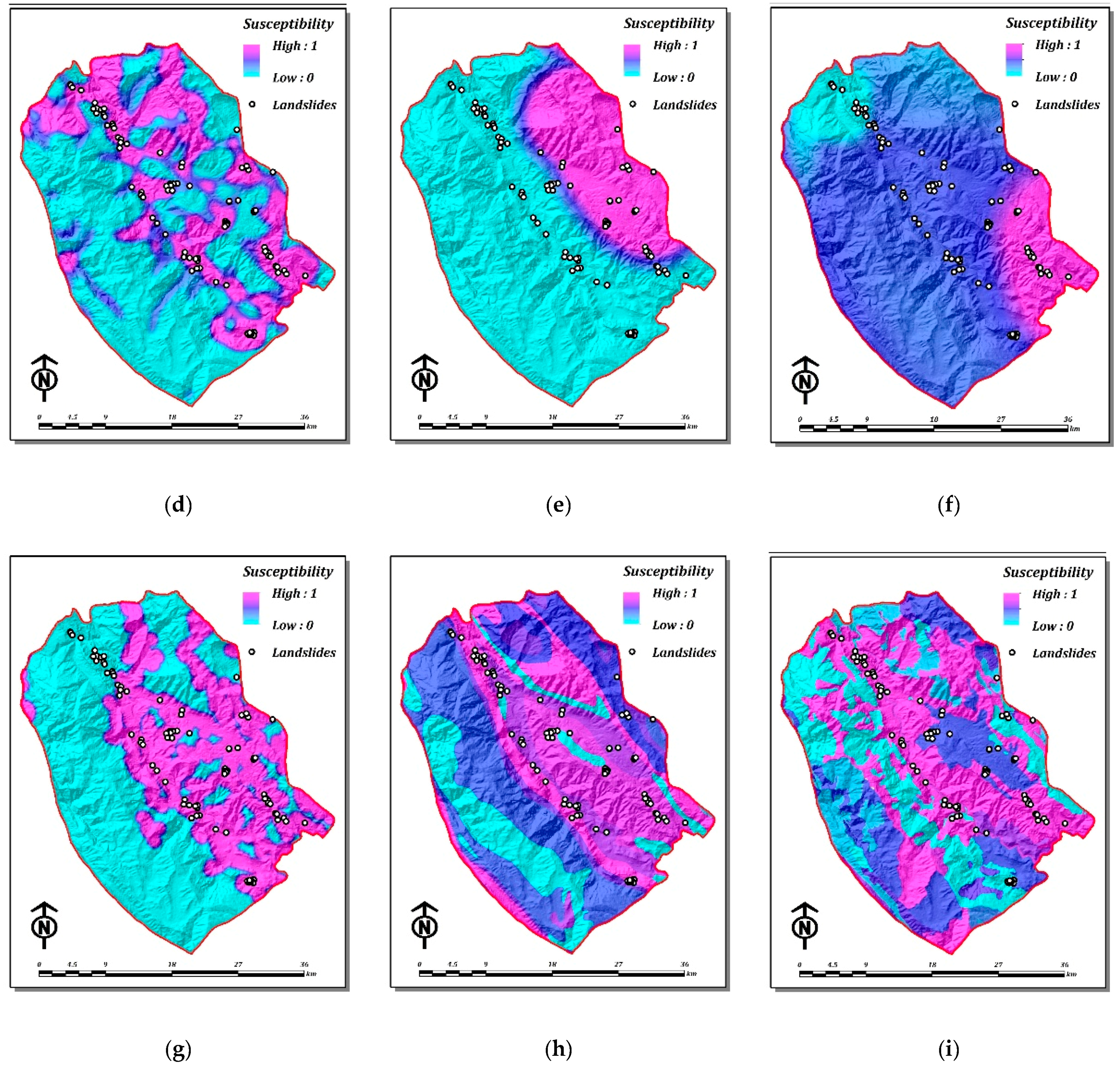

Lets walk through a couple examples first in order to grasp the intuition behind entropy before diving into the formalized definition. Furthermore, understanding entropy is a precursor to understanding other important concepts in statistical learning such as KL Divergence and Cross Entropy. With this, the concept of "entropy" as it pertains to information theory was born.įast forward to today, entropy is widely used in evaluating various machine learning models and describing probability distributions. In this article, he formalized a mathematical framework quantifying the amount of information needed to accurately send/receive messages, determined by the degree of "uncertainty" a message could contain. In 1948, Claude Shannon published an article called "A Mathematical Theory of Communication" which now serves as the basis behind the field of information theory. All rights reserved.Gradiently A Simple Explanation of Shannon Entropy This approach allows the quantification of the average information value of nucleotide positions, which can shed light on the coevolution of the canonical genetic code with the tRNA-protein translation mechanism.Īmino acids Genetic code Information theory RNA translation Shannon entropy.Ĭopyright © 2016 Elsevier Ltd. The relative importance of properties related to protein folding - like hydropathy and size - and function, including side-chain acidity, can also be estimated. By calculating the normalized mutual information, which measures the reduction in Shannon entropy, conveyed by single nucleotide messages, groupings that best leverage this aspect of fault tolerance in the code are identified. Each alphabet is taken as a separate system that partitions the 64 possible RNA codons, the microstates, into families, the macrostates. To evaluate these schemas objectively, a novel quantitative method is introduced based the inherent redundancy in the canonical genetic code. 
This fundamental insight is applied here for the first time to amino acid alphabets, which group the twenty common amino acids into families based on chemical and physical similarities. As with thermodynamic entropy, the Shannon entropy is only defined within a system that identifies at the outset the collections of possible messages, analogous to microstates, that will be considered indistinguishable macrostates. The Shannon entropy measures the expected information value of messages.
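To make the island examples concrete, here is a minimal Python sketch that computes entropy in bits for a discrete distribution. It assumes the standard Shannon formula, H = sum over outcomes of p * log2(1/p), which the text itself does not spell out. The function name `shannon_entropy_bits` and the three-outcome "island Z" are hypothetical, added only for illustration.

```python
import math


def shannon_entropy_bits(probabilities):
    """Return the Shannon entropy, in bits, of a discrete distribution.

    `probabilities` is a sequence of outcome probabilities that sum to 1.
    (Hypothetical helper for illustration; not from the original article.)
    """
    if any(p < 0 for p in probabilities):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probabilities), 1.0):
        raise ValueError("probabilities must sum to 1")
    # Outcomes with probability 0 are skipped: they contribute nothing,
    # since p * log2(1/p) tends to 0 as p tends to 0.
    return sum(p * math.log2(1 / p) for p in probabilities if p > 0)


island_x = [1.0]               # always sunny
island_y = [0.5, 0.5]          # sunny or rainy, equally likely
island_z = [0.5, 0.25, 0.25]   # hypothetical: sunny, rainy, cloudy

print(shannon_entropy_bits(island_x))  # 0.0
print(shannon_entropy_bits(island_y))  # 1.0
print(shannon_entropy_bits(island_z))  # 1.5
```

The first two results match the intuition from islands X and Y: a certain outcome needs zero bits, and a fair two-way choice needs one bit. The third shows that a less uniform, three-outcome distribution can need a fractional number of bits on average.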
